One of Intrepid's main tools is our Housing Value Impact Modeler. The tool helps people understand how prices co-move in neighboring cities, but it does…

Intrepid Insight Posts

Because of some server issues on our side, our orientation and mobility goal bank, created jointly with Cane and Compass, was down for a little…

Intrepid’s report on Culver City Fire Response Times uses a quantile regression model (or “median regression” since we look at the 50th quantile). This blog…

I absolutely love riddles. A few of my favorites include the Hobbits In a Line, 20 coins, and 5 Pirates. YouTube seems to have been…

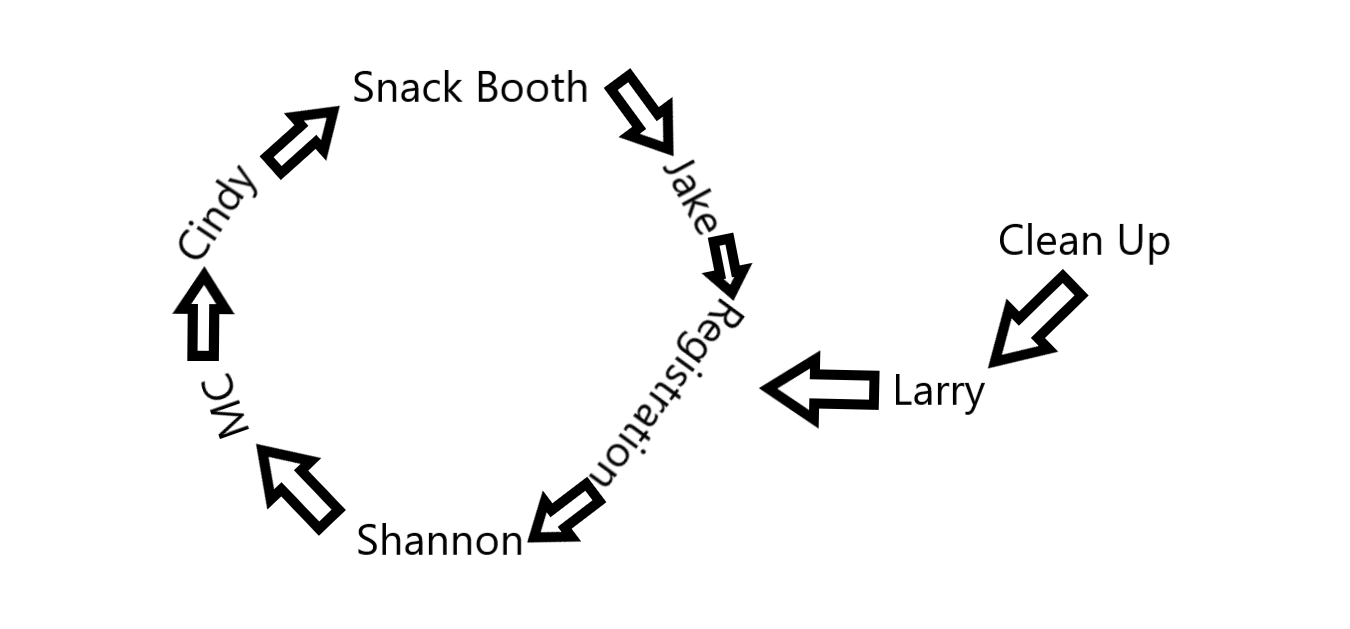

Our latest tool, “The Efficient Volunteer Matching Applet,” is now available on our website: It was also featured in a blog post I wrote for…

Surprisingly, data cleaning is quite time-intensive and often cannot be automated. In my experience, the skill set that makes someone good or bad at data cleaning is not exactly the same as the skill set that makes someone a good researcher or a good analyst. There is overlap, of course – creativity is helpful both in overcoming data cleaning challenges and in developing analytical models. But being a good analyst often requires one to be alert to the big picture and to make simplifying assumptions when necessary, whereas data cleaning is all about the odd cases – in fact, I think I have spent the vast majority of my time dealing with the 0.1% of observations that do not quite look like the other 99.9%.

Introduction: In the past weeks, the World Bank released new Enterprise Survey Data for 2018. This new data follows the same format as the…

Introduction: According to the World Bank, Kenya is classified as a lower-middle-income economy with a gross national income per capita of $1,460 (USD). As such, it…

Whether a non-profit is a small organization working on a niche issue or a multinational giant working to end global poverty, I believe the big problem facing its administrators can be summed up this way: “How do we generate the most ________ given ________?” The first blank can be filled in with a metric of success for the organization, such as “awareness,” “support,” “donations,” or “participation.”

The second blank can be filled in with whatever the relevant operating budget is for the program. Whether that number is $100 or $100 million is really irrelevant – the point is that non-profits have a finite amount of resources to accomplish a goal.

While there is a massive literature in economics, finance and management on how to maximize profit, there are not many free resources on how to apply these intuitions to non-profits. The data and technical expertise required to perform these types of analyses also exceed the capacity of most small non-profits. As a result of these two factors, optimizing operational strategies may seem like an impossible task for small organizations.
